2025 AI Predictions (Republished)

Resharing my 2025 Predictions. Originally written January 2025. Republished here so you can hold me accountable.

Foreword (January 2026):

A year ago, I wrote down 12 predictions about where AI would go in 2025. I shared them with teammates at Google Labs but never published them publicly.

That was a mistake. Predictions without accountability are just vibes.

So before I publish my scorecard (tomorrow) and grade each of these predictions against what actually happened, I’m republishing the original document here unedited. You can see exactly what I predicted, in my own words, before I reveal the final ratings.

Here’s what I wrote a year ago:

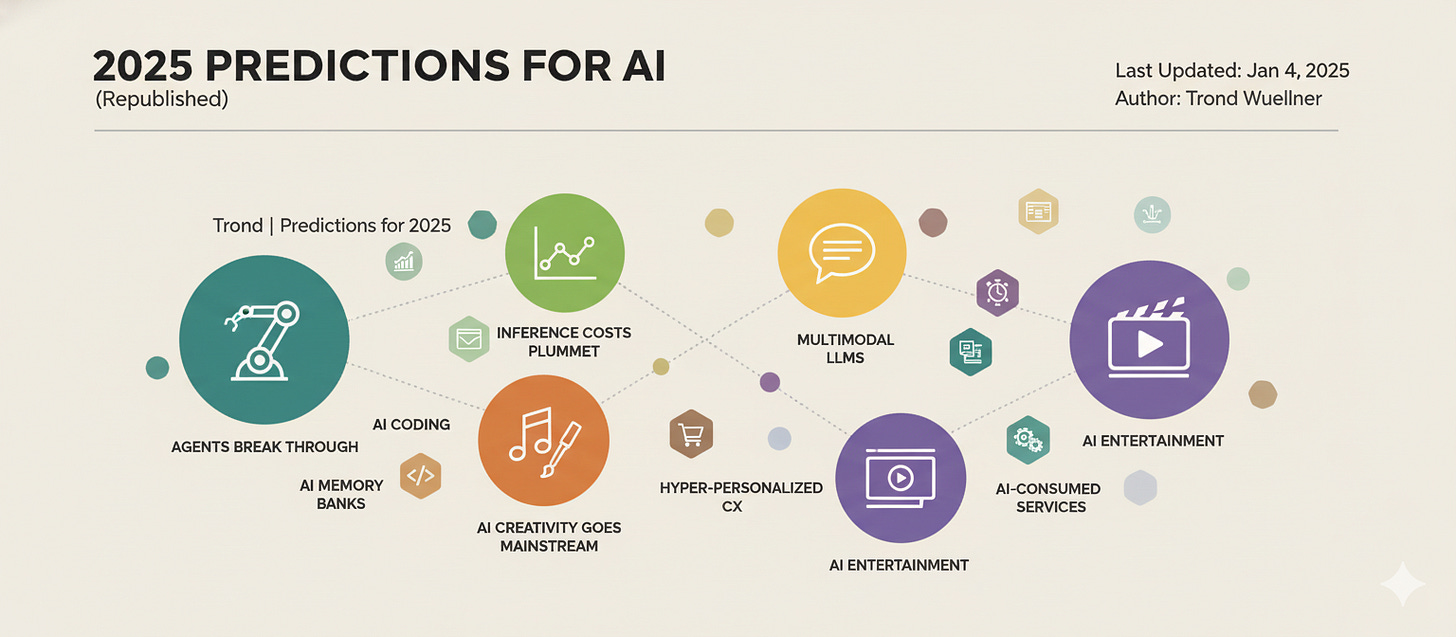

Predictions for AI In 2025

January 2025

If you thought the world of AI moved fast in 2024, you’d better buckle up for 2025, because I believe we’re about to enter a period of development with 50 years of productivity gains crammed into 10 years (or less). I think we’ll look back at 2025 as the seminal year for AI, much like we consider 1984 the pivotal moment for personal computing.

Building on what happened in 2024, AI is going to get even smarter and be everywhere, affecting pretty much everything we do. I won’t address whether we reach AGI, but here are a few thoughts on some trends I expect to see this year:

Inference costs continue to plummet; reasoning-heavy applications flourish

The cost of running AI models has been declining by as much as 10x per year, and this trend is likely to continue in 2025. This is driven by factors such as increased competition, hardware advancements, and more efficient algorithms.

The impact of this trend will be like Moore’s Law on steroids: inference costs plummet, making it feasible to deploy AI for wildly more complex tasks that require advanced reasoning and problem-solving. We’ll be able to build AI systems that can analyze complex data, identify patterns, and generate solutions to problems that were previously impossible.

Put more inference in your solutions.

Agents break through the hype; serving specialized needs but not yet mainstream

AI agents are becoming adept at performing tasks and achieving pre-defined goals without constant intervention. In 2025, we’ll see AI agents gain traction with specialized applications where their ability to automate tasks can be well-scoped in ways that limit unintended outcomes. This could include tasks such as scheduling, managing emails, conducting research, and even automating service interactions.

We’ll see experiments with proactive agents that operate autonomously, and there is an opportunity to establish a leadership position in this space by differentiating on trust.

Real-time multimodal LLMs evolve how we interact with AI; yet chat remains dominant

In 2025, we’ll witness a significant leap forward in the capabilities of multimodal LLMs, particularly in their ability to process and respond to information in real time. This means AI systems will be able to seamlessly integrate and understand information from multiple sources, including text, images, audio, voice and even video, all while responding dynamically to changes in the input streams.

The time is ripe to reinvent how we interact with AI and integrate it more seamlessly into applications. Tools like Astra will offer a major step forward, but I worry voice-based interactions will suffer from phone-anxiety trends common among Gen Z and Millennials.

Emergence of AI-produced interactive entertainment; a streaming service launches an AI cartoon

AI is already being used to generate creative content, including music, images, and videos. AI algorithms can now generate original stories, characters, and even animation, potentially leading to entirely new forms of entertainment with deeply embedded interactivity that would never be possible without AI.

In 2025, we’ll see a streaming service experiment with an AI-produced cartoon able to respond in near real-time to current events and viewer feedback. This will be a first step toward interactive, personalized and engaging entertainment experiences.

AI-creativity goes mainstream; a new entrant emerges as an AI-first competitor to Adobe

AI-powered creative tools are becoming so good that generated content is becoming difficult to distinguish from human-created work, and users are becoming more comfortable integrating these systems into their regular creative lives.

In 2025, we should expect AI-augmented creativity to explode, with AI tools playing a more prominent role in art, music, advertising and video production. Continued model improvement will lead to new forms of artistic expression and a revolution across the creative world, posing a greater threat than ever before to traditional incumbents.

A new entrant will emerge as an AI-first competitor to Adobe.

AI-music empowers new voices; an AI song will break through on Spotify

AI music tools are empowering individuals with limited musical training to create original music. These tools are becoming more accessible and user-friendly, allowing anyone to create original music regardless of their musical background. This will lead to a democratization of music creation, with new voices and styles emerging from unexpected sources, and open up opportunities for new methods of creator-centric social music experiences.

In 2025, I believe an AI-generated song will achieve mainstream success on a platform like Spotify, further demonstrating the potential of AI in music creation.

AI-augmented coding gains traction, but complexity prevents widespread use

AI coding tools are becoming increasingly popular, assisting developers with code generation, debugging, and documentation. These tools can automate repetitive coding tasks, suggest code completions, and even generate entire code blocks. However, complex coding tasks still require human expertise and oversight, particularly for deployment as web and mobile apps, limiting mainstream adoption.

In 2025, we can expect AI coding tools like Jules to become widely used among developers, but significant simplification across the software lifecycle is needed before such capabilities can become mainstream tools accessible to non-developer users.

Embodied AI moves beyond novelty; virtual humans transform customer service

Embodied AI, particularly in the form of virtual humans, will find traction in a range of real-world applications. These virtual humans will be more than just avatars; they will be capable of interacting with their environment and humans in a more natural and intuitive way.

We’ll see existing chat-based character systems evolve to include fully interactive virtual humans with photorealistic personification, recognizable voices and body movements. These Embodied AI personas will serve as the presentation layer on top of AI Agents to deliver engaging customer service experiences not previously possible.

Hyper-personalized shopping experiences emerge; an AI shopping agent grabs market share

AI is already being used to personalize recommendations, offers, and marketing messages for businesses. In 2025, we can expect even more sophisticated AI-powered shopping tools that anticipate customer needs and provide highly personalized experiences enabled across merchants.

I believe we’ll also see consumers embrace hyper-personalized AI shopping assistants designed to save people money, identify better products based on individualized style, budget, and preferences, and even haggle with sellers to get them the best possible prices. This will empower consumers and potentially disrupt traditional retail models.

AI-generated immersive worlds arrive; an AI game launches with GenWorlds

AI is already being used to generate game content, including characters, environments, and even storylines. In 2025, we can expect to see more sophisticated AI-powered games that offer dynamic and immersive experiences.

Imagine AI that can generate vast, interactive and detailed game worlds that adapt to player choices and actions, creating unique and unpredictable gameplay. This will lead to new genres of games where players explore AI-generated worlds, interact with AI-powered characters, and experience emergent narratives that unfold in real time.

This will revolutionize world design, offering endless possibilities for exploration and replayability. AI will power non-playable characters (NPCs) with complex motivations, relationships, and even personal histories, leading to emergent narratives and unpredictable gameplay.

Services emerge designed to be consumed by AI agents; redefining the web

As AI agents become more prevalent, we can expect to see services and applications specifically designed for AI consumption. This will lead to a new paradigm for the web, where AI agents interact with each other and access information in ways that are different from traditional human-computer interaction.

Imagine AI agents accessing and processing information from websites, APIs, and other online services, autonomously gathering data, making decisions, and completing tasks on behalf of their human users. This will lead to new forms of online services and applications optimized for AI interaction, transforming the way we publish content on the web.

AI memory banks emerge as a new category; always-on memory support gains traction

In 2025, we’ll see the emergence of AI memory banks as a valuable tool for individuals and businesses. These AI-powered systems will act as an “always-on” memory support, capable of storing, organizing, and retrieving vast amounts of information, effectively augmenting human memory and enhancing cognitive capabilities.

Imagine an AI assistant that remembers every conversation you’ve ever had, every document you’ve ever read, and every event you’ve ever experienced. This AI could then provide you with relevant information and insights whenever you need them, helping you make better decisions, learn more effectively, and even enhance your creativity.

What’s Next

That’s what I wrote a year ago. No edits. No hedging after the fact.

Next post: the scorecard. I’m grading each of these predictions against what actually happened in 2025. Some I nailed. Some I whiffed. A few things happened that I didn’t see coming at all.

Subscribe so you don’t miss it.

Originally written January 2025. Republished January 2026.