2026 is the year of Just-in-Time UX

I wildly underestimated how fast we'd get here.

Earlier this year I wrote that 2026 would be the year AI learned to compose UIs in real time. Users watching interfaces assemble live. Interactive components with full state. Agents drawing UI on the fly specifically to solve your precise need.

Looks like I nailed the prediction, but underestimated the speed and the weirdness.

By May 2026—literally right now—an entire ecosystem is crystallizing around this notion. A2UI. Shadify. AG-UI. And a bunch of others I’m still discovering. All shipping real-time, generative UIs. Software is about to get rad!

My Original Prediction

Here’s what I actually wrote back in January (from “12 AI Predictions for 2026”):

Chat has been the predominant interface for AI. There are interesting examples of linking useful UI components into a chat interface. In November 2025, Anthropic and OpenAI partnered to release the MCP Apps Extension—a specification that brings standardized interactive UI capabilities to the Model Context Protocol.

This will lead to a new class of apps that self-compose UIs in real-time. They’ll draw components for existing patterns from vast libraries of capabilities, but when a novel need arises, they’ll create bespoke experiences to match real-time user requests. The very definition of software will forever change, and 2026 is when we see the beginnings of this evolution.

So I was thinking: MCP standardizes things → builders adopt → apps ship interactive UX. A nice sequential story. But that’s not quite how it went.

A lot started unifying when Google shipped A2UI. It happened before there was consensus we needed it. Builders started using it without waiting for the ecosystem to debate. The entire thing organized backward—not “let’s standardize first, then build,” but “let’s build, and the standard will emerge from what works.” I kind of think that trend is here to stay.

Vibe coding might play a role, but that’s not all of it. We’re all moving too fast for official standards to keep up. Standards organizations are going to need to stay on their toes. Or maybe it’s already too late. Yeah, they’re probably more historians than futurists now.

Real-Time UX is Shipping Now

Before A2UI, this space was a mess. Everyone building generative UI was inventing their own protocol. Custom WebSockets. Ad-hoc JSON schemas. No way for an agent trained on one platform to work on another.

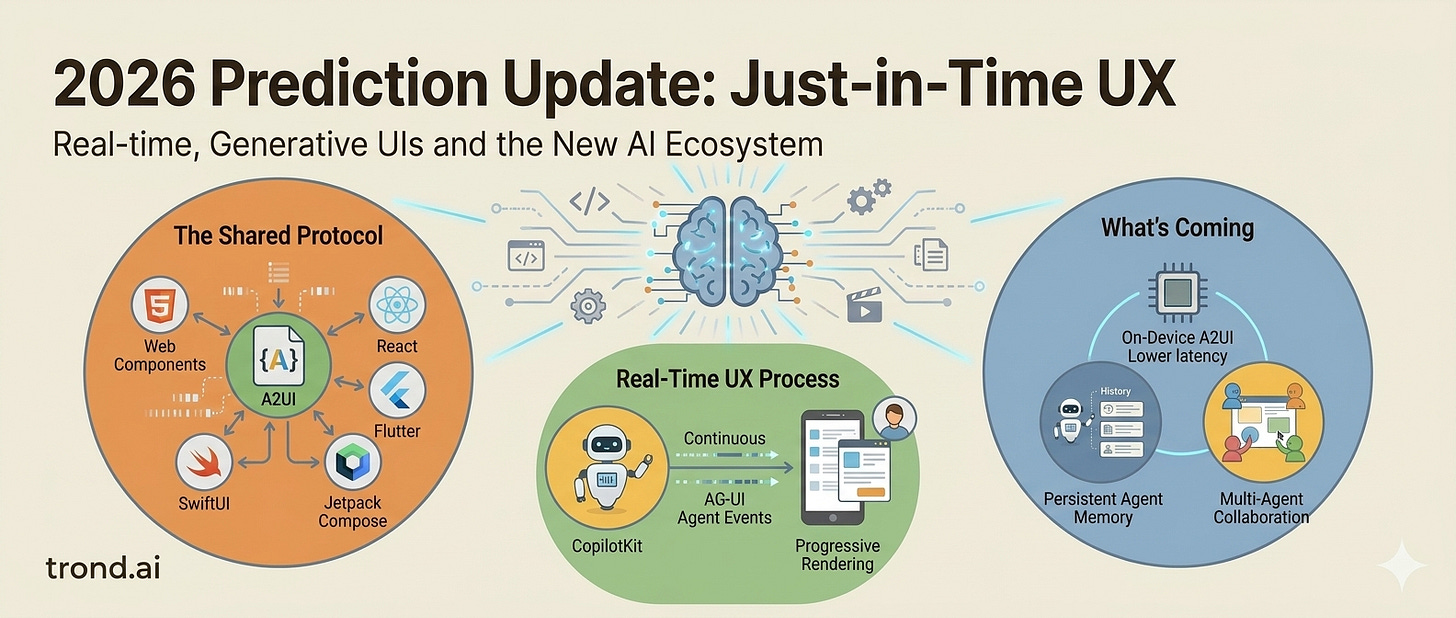

A2UI is solving that. It’s boring on purpose—just a declarative way for agents to describe UIs. Not code. Not instructions. Just “here’s what this UI looks like.” JSON you can stream, component by component, platform by platform. This is key because now:

Web Components, Angular, React, Flutter, SwiftUI, Jetpack Compose can all understand the same language

One agent can work everywhere

It requires no translation and no glue code.

It’s all pulled together via progressive rendering. As the agent thinks and generates components, the client renders them live. You’re not stuck watching a spinner. You’re watching the UI assemble. Components appearing. Lists populating. Forms showing up. All while the agent is still thinking.
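Here’s a rough sketch of what progressive rendering looks like from the client side. To be clear, this is not the actual A2UI wire format: the message shape (`id`, `type`, and the per-type fields) and the function names `agent_stream`, `render`, and `progressive_render` are all made up for illustration. The point is only that the screen is redrawn after every message instead of once at the end.

```python
import json
from typing import Iterable, Iterator


def agent_stream() -> Iterator[str]:
    """Stand-in for an agent: yields one JSON message per component.

    The message shape here is hypothetical, not the A2UI schema.
    """
    yield json.dumps({"id": "title", "type": "Text", "text": "Flights to Lisbon"})
    yield json.dumps({"id": "results", "type": "List", "items": ["TAP 201", "UA 64"]})
    yield json.dumps({"id": "book", "type": "Button", "label": "Book"})


def render(component: dict) -> str:
    """Turn one component description into a plain-text widget."""
    kind = component["type"]
    if kind == "Text":
        return component["text"]
    if kind == "List":
        return "\n".join(f"- {item}" for item in component["items"])
    if kind == "Button":
        return f"[ {component['label']} ]"
    return f"<unsupported {kind}>"


def progressive_render(messages: Iterable[str]) -> Iterator[list[str]]:
    """Yield a snapshot of the screen after every incoming message."""
    screen: dict[str, str] = {}  # insertion order doubles as layout order
    for raw in messages:
        component = json.loads(raw)
        # Re-sending an existing id replaces that widget in place.
        screen[component["id"]] = render(component)
        yield list(screen.values())


if __name__ == "__main__":
    for step, snapshot in enumerate(progressive_render(agent_stream()), start=1):
        print(f"--- after message {step} ---")
        print("\n".join(snapshot))
```

Each snapshot is one more widget than the last, which is the "watching the UI assemble" effect in miniature.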

That’s becoming the default UI paradigm. 2026 isn’t just the year where UI is generated in real time. 2026 is the year of generative UI.

Let’s talk about a few of the big things driving this space:

Google A2UI: The Shared Protocol

Google shipped A2UI. Agent-to-User Interface. It’s deceptively simple.

Instead of agents generating code—React components, HTML, Flutter widgets—they generate a flat JSON description of what the UI should look like. That’s it. One agent, any platform.
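To make "flat JSON, any platform" concrete, here’s a minimal sketch. The schema below (`id`, `type`, `children` as a list of ids) is my own simplification, not the real A2UI spec, and `to_html` and `to_text` are hypothetical stand-ins for platform renderers. The same flat description goes into both; neither needs the agent to know which platform it is talking to.

```python
import json
from html import escape

# A flat list: parents point at children by id instead of nesting them.
# Hypothetical schema for illustration only.
UI_JSON = """
[
  {"id": "root", "type": "Column", "children": ["heading", "submit"]},
  {"id": "heading", "type": "Text", "text": "Sign up"},
  {"id": "submit", "type": "Button", "label": "Create account"}
]
"""


def index_by_id(components: list[dict]) -> dict[str, dict]:
    """Build an id -> component lookup so children can be resolved."""
    return {component["id"]: component for component in components}


def to_html(node_id: str, by_id: dict[str, dict]) -> str:
    """One renderer: emit HTML for the component with the given id."""
    node = by_id[node_id]
    if node["type"] == "Column":
        inner = "".join(to_html(child, by_id) for child in node["children"])
        return f"<div>{inner}</div>"
    if node["type"] == "Text":
        return f"<p>{escape(node['text'])}</p>"
    if node["type"] == "Button":
        return f"<button>{escape(node['label'])}</button>"
    raise ValueError(f"unknown component type: {node['type']}")


def to_text(node_id: str, by_id: dict[str, dict], depth: int = 0) -> str:
    """A second renderer: emit an indented text outline from the same data."""
    node = by_id[node_id]
    pad = "  " * depth
    if node["type"] == "Column":
        lines = [to_text(child, by_id, depth + 1) for child in node["children"]]
        return "\n".join([f"{pad}Column"] + lines)
    if node["type"] == "Text":
        return f"{pad}Text: {node['text']}"
    if node["type"] == "Button":
        return f"{pad}Button: {node['label']}"
    raise ValueError(f"unknown component type: {node['type']}")


components = index_by_id(json.loads(UI_JSON))

if __name__ == "__main__":
    print(to_html("root", components))
    print(to_text("root", components))
```

A flat list is also friendlier to streaming than a nested tree, since each entry is complete on its own and can arrive in any order before the tree is resolved.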

Version 0.9 shipped in April 2026. Multiple renderers are already shipping stable versions. This should have taken 18 months to standardize. It took 6.

Shadify: Real-Time UI Component System

Describe a UI in plain English. Get a live, interactive shadcn/ui page back. Export it as clean React code. This makes it really easy to deliver a consistent UI because the agent isn’t inventing new components, it’s remixing what already exists. But the remix is custom and specific to your request. And it all happens in real time, which is amazing. It’s also open source under an MIT license, which I like.
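The "remix, don’t invent" idea is easy to picture as a catalog check. I haven’t read Shadify’s internals, so treat this as a generic sketch of the pattern rather than how Shadify does it: the `CATALOG` contents and the `validate` function are hypothetical. The agent may only use component types and props that appear in the catalog, and anything else is rejected before it reaches the renderer.

```python
# Hypothetical catalog: component type -> props the agent is allowed to set.
CATALOG: dict[str, set[str]] = {
    "Card": {"title"},
    "Button": {"label"},
    "Input": {"placeholder"},
}


def validate(spec: list[dict]) -> list[str]:
    """Return a list of problems; an empty list means the spec is renderable."""
    errors: list[str] = []
    for position, item in enumerate(spec):
        kind = item.get("type")
        if kind not in CATALOG:
            errors.append(f"item {position}: unknown component {kind!r}")
            continue
        # Every key other than "type" must be a prop the catalog allows.
        extra = set(item) - CATALOG[kind] - {"type"}
        if extra:
            errors.append(f"item {position}: unexpected props {sorted(extra)}")
    return errors
```

For example, `validate([{"type": "Card", "title": "Hi"}])` returns an empty list, while a spec that asks for a `Carousel` comes back with an "unknown component" error.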

CopilotKit: Making Generative UI the Default

CopilotKit has been on the Generative UI train for a while and is building some of the most interesting capabilities with it. They can help an agent generate a stargazing app with a live sky compass, a 3D solar system, and interactive dashboards with charts. All in real time, in response to requests. It’s pretty amazing.

This isn’t a one-off demo. It’s a framework thousands of developers are using to compose UIs on the fly. This space is moving so fast that framework companies are already gaining traction.

AG-UI Protocol: Streaming Agent Events in Real-Time

AG-UI is an open, lightweight, event-based protocol that standardizes how AI agents connect to user-facing applications. It defines how agent state, UI intents, and user interactions flow between your model or agent runtime and the frontend.

This allows agent interactions to feel more real-time because instead of waiting for a complete response, you get play-by-play updates:

“Agent is analyzing...” → tokens start flowing → “Generating content...” → UI updates as events arrive.

Combined with A2UI, this creates a full stack: agents think out loud, UIs render incrementally, users experience live feedback instead of spinners. It’s a key part of how real-time generative UI is beginning to win.
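The play-by-play flow above maps nicely onto a stream of small events plus a reducer on the client. The event names below (`status`, `token`, `finished`) are simplified placeholders I picked for this sketch, not the event types defined by the AG-UI spec, and `ViewState`, `agent_events`, and `apply_event` are hypothetical too.

```python
from dataclasses import dataclass
from typing import Iterator


@dataclass
class ViewState:
    """What the frontend shows at any given moment."""
    status: str = "idle"
    text: str = ""
    done: bool = False


def agent_events() -> Iterator[dict]:
    """Stand-in for an agent runtime emitting events as it works."""
    yield {"type": "status", "message": "Agent is analyzing..."}
    yield {"type": "token", "delta": "Hello"}
    yield {"type": "token", "delta": ", world"}
    yield {"type": "status", "message": "Generating content..."}
    yield {"type": "finished"}


def apply_event(state: ViewState, event: dict) -> ViewState:
    """Fold one event into the view state; unknown events are ignored."""
    if event["type"] == "status":
        state.status = event["message"]
    elif event["type"] == "token":
        state.text += event["delta"]  # text grows as tokens arrive
    elif event["type"] == "finished":
        state.status = "done"
        state.done = True
    return state


if __name__ == "__main__":
    state = ViewState()
    for event in agent_events():
        state = apply_event(state, event)
        print(state)  # the UI would re-render here, once per event
```

Because the client only ever folds events into state, it doesn’t care which agent framework produced them, which is the whole appeal of a shared event protocol.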

Why This Happened in 6 Months (Not 12)

A2UI standardized what was scattered. Before: WebSockets, custom protocols, one-off implementations. After: One open protocol, multiple renderers ready, agents speaking the same language.

The ecosystem coalesced simultaneously. MCP + A2UI + AG-UI = A complete stack. Not pieces in sequence—a system shipping all at once.

Incentives aligned perfectly. Developers hated the code → compile → deploy loop. Streaming eliminates it. Agents eliminate manual state changes.

Economics improved. Streaming is cheaper than batch. Partial results matter before the full response arrives. Agents calling tools directly mean fewer context switches and lower costs.

I predicted: Self-composing UIs. Bespoke experiences. Real-time composition. All of that landed and more. Today, you’re not asking the AI to write code. You’re delegating state changes to an agent that speaks your app’s language. The UI is just the visualization layer.

What’s Coming

The shift from “chat interface” to “standardized protocols for agent-composed, real-time, voice-driven state manipulation” happened in 6 months instead of 12. And it’s going to accelerate from here. I see more on the horizon:

On-device A2UI. All of this currently assumes live agents in the cloud. Imagine agents running locally, rendering UIs via A2UI to your device. Lower latency. Privacy. No API dependency.

Persistent agent memory in UX. Right now UIs are stateless across sessions. What happens when your agent remembers your interaction patterns, preferences, and context, and adapts the interface itself? The UI becomes genuinely personal.

Multi-agent, shared-state collaboration. Multiple agents, multiple users, one shared canvas. Teams collaborating through agent-composed interfaces. No more meetings about how to format the data—the agent generates the interface.

Accessibility baked in by default. Voice + state is already 10x more accessible than clicking. The next step: Agents that automatically generate semantic HTML, alt text, keyboard navigation, and ARIA as they compose. Accessibility isn’t an afterthought—it’s in the protocol.

Agents in your existing tools. A2UI renderers shipping inside enterprise software. Slack. Figma. Notion. Your existing tools suddenly have agent-driven, real-time UI generation built in. The line between “app” and “agent” disappears.

The definition of software hasn’t changed yet. But the friction is gone. By end of 2026, “real-time generative UI with A2UI” will be as normal as “responsive design” was 10 years ago. The developers who ship voice-driven agents that manipulate state directly win. The platforms that make this the default layer—not an add-on—own the next era.

If you’re building tools, this isn’t “the moment.” This is accelerating. The infrastructure exists. The pattern is proven. The ecosystem is moving. The question is simple: Are you shipping voice-driven agents that manipulate state in real time, or are you still asking users to click?

Because by the time you finish reading this, someone will have shipped something new that’s doing exactly that.
