I Made 12 AI Predictions Last Year. Here’s My Honest Scorecard.

A year ago, I wrote down what I thought would happen in AI. Time to see if I earned it.

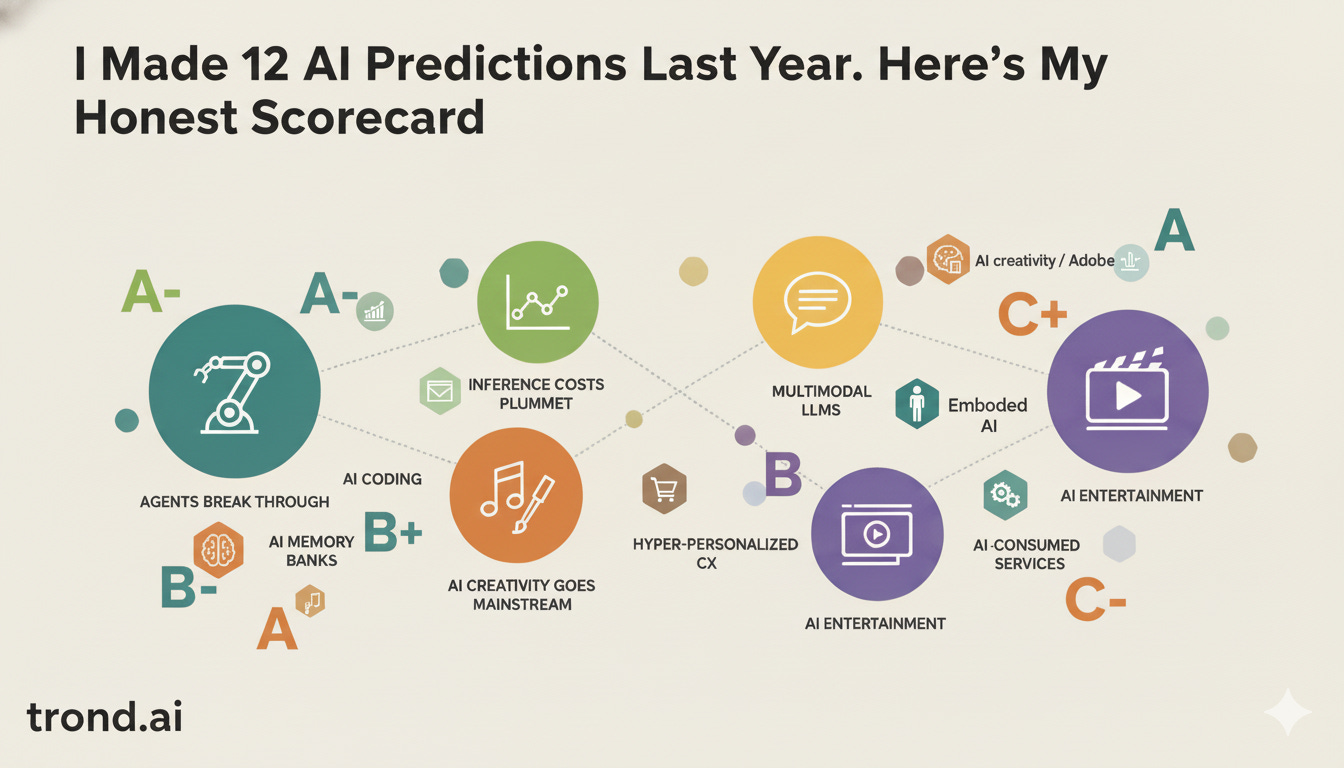

TL;DR: 4 A’s, 5 B’s, 3 C’s. Overall: B+. I nailed inference costs, AI agents, AI music, and services-for-AI. I whiffed on embodied AI and AI game worlds: the tech is there but the products aren’t. Biggest lesson: technology moves fast, but products take longer.

A year ago I wrote down 12 predictions and hit publish. I reposted them yesterday, and you can read them here: 2025 AI Predictions (Republished)

That’s the crazy part about predictions; you can’t edit them later. They just sit there, waiting to make you look smart or foolish. I called 2025 the potential “1984 moment” for AI. Predicted 50 years of productivity gains crammed into 10. Bold claims.

Some held up. Some didn’t. A few things happened that I missed entirely. I’m grading myself honestly. If you only remember your hits, you learn nothing. I’ve already started writing my 2026 predictions, so this is all part of the process for me. I write to think and build to learn.

The Hits

1. Inference Costs Continue to Plummet (A)

What I predicted: Costs declining 10x per year, enabling “reasoning-heavy applications” that were previously impossible.

What happened: Nailed it. Stanford’s 2025 AI Index shows inference costs for GPT-3.5-level performance dropped 280x and kept falling. Epoch AI found price drops from 9x to 900x depending on the benchmark. It started early in the year when DeepSeek rattled markets by offering R1 at 20-50x cheaper than comparable OpenAI models. That turned into a performance war with API costs dropping to fractions of a cent per million tokens.

More importantly, this enabled exactly what I predicted: chain-of-thought, multi-step agents, and complex agentic workflows at economically viable scale.

Grade: A — The magnitude was arguably understated.

Why this matters to me: I work on AI products at Google Labs. A year ago, some of the experiences we wanted to build were economically impossible. Now they’re not. That 280x drop isn’t an abstract number—it’s the difference between “interesting demo” and “scalable product.”

2. AI Agents Break Through the Hype (A-)

What I predicted: Agents gain traction in “specialized applications where their ability to automate tasks can be well-scoped.”

What happened: 2025 really was the dawn of the agent.

OpenAI kicked it off with the launch of Operator, an AI that browses the web, fills forms, and completes purchases on your behalf. Anthropic followed with Claude Computer Use, which lets AI control your mouse and keyboard. We launched Mariner at Google Labs, and by mid-year, agentic browsers from Perplexity (Comet), The Browser Company (Dia), and Opera (Neon) had reframed the browser as an active participant. Agents are core parts of Claude Code, Antigravity, Cursor and so many other systems people use every day.

Even enterprise adoption exploded: 68% of large companies now use AI agents (up from 11% two quarters prior), and the market is projected to grow from $13.8 billion to $140.8 billion by 2032. Despite the now-debunked MIT survey about AI projects failing in the enterprise, the application of agents at work truly started to take hold in 2025.

But here’s the nuance I got right: these agents thrived in well-scoped applications. The OSWorld benchmark shows humans at 72.4% accuracy versus 38.1% for OpenAI’s best model. Agents are powerful but not yet fully autonomous. The “specialized needs” framing I predicted still largely holds. I think this expands dramatically in 2026: we’re going to see agents organized into swarms and teams, with much better-coordinated workflows in which tool-using agents perform a huge variety of tasks.

Grade: A- — Timing and trajectory are largely right. “Mainstream” is generous, but defensible.

3. AI Music Empowers New Voices; An AI Song Breaks Through (A)

What I predicted: “An AI-generated song will achieve mainstream success on a platform like Spotify.”

What happened: Breaking Rust hit #1 on Billboard’s Country Digital Song Sales chart with “Walk My Walk.” The AI-generated country artist has 2 million monthly listeners on Spotify.

But it wasn’t alone. The Velvet Sundown hit #1 on Spotify’s Viral 50 in the UK, Norway, and Sweden. Xania Monet, an AI persona created by a Mississippi poet, signed a multi-million dollar record deal. Deezer reported that 50,000 fully AI-generated songs are uploaded daily. Spotify removed 75 million “spammy” AI tracks in 12 months, showing both the interest and the challenge here. The floodgates have opened and AI music is almost certainly here to stay.

That’s not to say there isn’t backlash. Artists are hesitant about what this all means for their work and we’ve seen Suno, Udio and major record labels go through some things together. There are really important copyright issues at stake and I’m proud of the stance we’ve taken at Google on this topic. I’m not going to get into details, but I think Lyria strikes a good balance here.

Back to the grades. I predicted a song would break through. Multiple artists hit the charts. I was too conservative, and now that the gates are open I’m more excited than ever for what 2026 will bring.

Grade: A

4. Services Emerge Designed for AI Agents (A)

What I predicted: “Services and applications specifically designed for AI consumption... transforming the way we publish content on the web.”

What happened: The Model Context Protocol took the world by storm.

Anthropic launched MCP in November 2024 as an open standard for connecting AI to external systems. By 2025, it became the de facto infrastructure for agentic AI. OpenAI adopted it in March. Google DeepMind followed. In December, Anthropic donated MCP to the Linux Foundation’s new Agentic AI Foundation, co-founded with OpenAI and Block.

MCP is now integrated into ChatGPT, Cursor, Gemini, Microsoft Copilot, and VS Code. AWS, Google Cloud, and Azure all support it. Thousands of MCP servers exist for enterprise systems and hobbyist creators. Designing for AI agent use is more important than ever.

Similarly, OpenAI released AGENTS.md as a standard for giving AI agents project-specific guidance, and it has already been adopted by 60,000+ open source projects. If you use Antigravity, Claude Code, Codex, Lovable, or Cursor, you’re almost certainly using an AGENTS.md file as part of your workflow.

This prediction was more right than I understood when I wrote it.

Grade: A

Builder’s note: MCP is the kind of infrastructure that seems obvious in retrospect but required someone to just... build it. Anthropic shipped it, open-sourced it, then donated it to a foundation. That’s how you win an ecosystem. Kudos.

5. AI-Augmented Coding Gains Traction (B+)

What I predicted: Tools like Jules become “widely used among developers” but “significant simplification across the software lifecycle is needed before such capabilities can become mainstream tools accessible to non-developer users.”

What happened: Some places are reporting that as much as 90% of dev teams now use AI in their workflows (up from 61%). GitHub Copilot leads with 42% market share; Cursor is at 18%. Almost half of companies now have at least 50% AI-generated code. And if you’ve been on X over the last few weeks, you’d be forgiven if you thought 100% of people were making projects over the holidays with Claude Code.

Cursor claims that users complete complex tasks 40-60% faster when using their tools. Claude Code, Windsurf, and GPT-5’s Codex agent all shipped major improvements. Google acqui-hired (what do we call these things?) the Windsurf founders and then launched Antigravity. The space is hotter than ever, and “vibe coding” entered the vocabulary.

But here’s where I was both right and wrong: I said non-developers would need “significant simplification” before these tools became accessible. That simplification did sort of happen, but the mainstream crossover for non-developers is still incomplete. The barrier is lower, but there’s still a lot to do here. Look out for my 2026 predictions about JIT UX for more.

Grade: B+ — Developer adoption exceeded expectations. Non-developer crossover remains a work in progress.

6. Hyper-Personalized Shopping Experiences Emerge (B+)

What I predicted: “AI shopping assistants designed to save people money, identify better products based on individualized style, budget, and preferences, and even haggle with sellers.”

What happened: Every major AI company launched shopping features:

Perplexity rolled out Instant Buy with PayPal integration—ask “what’s the best winter jacket if I live in San Francisco and take a ferry to work?” and it remembers your context

OpenAI launched Shopping Research with product cards and personalized guides

Amazon upgraded Rufus and tested “Buy For Me”—an agent that purchases from other sites within the Amazon app

Google launched Doppl (one of my projects) and integrated Try On You features across the Shopping ecosystem. Nano Banana really raised the state of the art for these features this year.

Startups like Doji and Phia are getting close to some of these capabilities, particularly price hunting, but aren’t quite where I imagined yet.

Morgan Stanley projects AI agents could add $115 billion in U.S. e-commerce spending by 2030. Adobe says AI-assisted shopping grew 520% this holiday season. But the “haggle with sellers” bit? Not quite there yet. And the experience is fragmented: Amazon sued Perplexity over its Comet browser completing purchases without permission. This space will stay hot in 2026, and I’m pretty excited about how AI agents are going to help us engage with commerce in the future.

Grade: B+ — Directionally correct. “Haggle” was ahead of its time.

Partial Credit

7. AI Streaming Cartoon Launches (B)

What I predicted: “A streaming service experiment with an AI-produced cartoon able to respond in near real-time to current events and viewer feedback.”

What happened: Close, but not quite there yet.

Fable launched Showrunner, the “Netflix of AI,” an interactive platform where you create episodes using text prompts. Amazon backed it. Their “Exit Valley” show features AI versions of Sam Altman and Elon Musk. It’s probably the closest to what I imagined when I wrote the prediction, but it still falls short of where I think this is going.

The big headline this year is that Disney announced Sora will create videos featuring 200+ Disney characters, including distribution of user-made clips on Disney+. Disney invested $1 billion in OpenAI to get this deal done. Similarly, Netflix is developing interactive voting for its Star Search reboot, which is an interesting puzzle piece to keep an eye on.

The industry is clearly moving toward interactive, AI-generated content. But a full AI cartoon responding to real-time events? Not quite yet. The pieces are there, but the product isn’t.

Grade: B — The infrastructure is here, but the consumer product I described is a 2026 story.

8. AI Memory Banks Emerge as a New Category (B-)

What I predicted: “AI memory banks as a valuable tool... an ‘always-on’ memory support, capable of storing, organizing, and retrieving vast amounts of information.”

What happened: The category emerged and a lot of people are building parts of this, but it’s not where I predicted it would be.

I think the biggest headline was when Limitless launched its $99 Pendant wearable. It let you record conversations, get AI transcripts, ask “what did we decide?” and jump to exact quotes. The product found an audience, and then Meta acquired Limitless in December, immediately halting Pendant sales. Meta folded the technology into its “personal superintelligence” roadmap for future Ray-Ban glasses.

Microsoft’s Recall feature generated controversy but showed the category has legs. A million note-taking apps, tools, and features are flooding our workflows, but none are quite what I meant. OpenAI is rumored to be working on a “pen” and I suspect it’s close to what I imagine taking hold here. I guess we’ll see.

Grade: B- — I think the category is validated but we still need to see the killer product.

9. AI-Creativity Goes Mainstream; Adobe Faces Competition (B-)

What I predicted: “AI-augmented creativity to explode... A new entrant will emerge as an AI-first competitor to Adobe.”

What happened: AI creativity absolutely exploded. Flow, Sora, Runway, Kling, and Pika pushed video generation into the mainstream as capabilities leaped forward. Every major model improved dramatically at image generation, and Nano Banana set a new standard that Nano Banana Pro somehow leapfrogged. You can one-shot a complex infographic, correctly solve complex math within beautifully rendered text, and even create full presentations based on your source materials. Absolutely monumental progress this year.

But a clear Adobe challenger? Not yet. Canva deepened AI integration. Figma pushed AI features. Startups like Ideogram and Leonardo.ai gained tons of users. But none really emerged as the Adobe killer. And Adobe fought back harder than I expected. Firefly is embedded across Creative Cloud. GenAI capabilities from partners are being directly integrated across the Adobe portfolio. They just signed a big deal with Runway. Adobe is awake and they’re cooking again.

Grade: B- — Creativity explosion definitely happened, and there is a lot of new competition, but Adobe is not asleep at the wheel, which is exciting to see. As a photographer, I’ve been a loyal Adobe Creative Suite subscriber and am excited about the new capabilities at my fingertips.

10. Real-Time Multimodal LLMs Evolve Interaction (C+)

What I predicted: “AI systems will be able to seamlessly integrate and understand information from multiple sources, including text, images, audio, voice and even video, all while responding dynamically.”

What happened: The technology arrived. GPT-4o, Gemini 3.0, and Claude all improved multimodal capabilities dramatically. Real-time voice with AI became the de facto standard for many users.

But my concern about “phone-anxiety trends among GenZ and Millennials” limiting voice adoption? Anecdotally, maybe that’s correct, but it’s still really hard to know. Chat definitely remains the dominant interface for most everyone despite massive voice improvements. I don’t know many people who actually use the video input paradigm yet.

I still love the idea of Astra, and I’ve seen some cool use cases that integrate Gemini Live, but so far it hasn’t redefined interaction models. We’re still mostly typing.

Grade: C+ — Multimodal models are definitely here, but behaviors change slowly. We’re all still chatting with our AIs.

The Misses

11. Embodied AI / Virtual Humans Transform Customer Service (C)

What I predicted: “Fully interactive virtual humans with photorealistic personification, recognizable voices and body movements... as the presentation layer on top of AI Agents.”

What happened: Progress, but nothing transformative here yet.

AI avatars are improving quickly. Synthesia and HeyGen refined video generation with talking heads. But photorealistic virtual humans serving as widespread customer service agents? That hasn’t gotten past janky demos yet. The dream of Poe from Altered Carbon serving as a virtual concierge when I check into my next hotel is still out on the horizon, I’m afraid.

Most customer service AI remains voice-based or chatbot-based. The “embodied” layer is what isn’t ready yet. Cost, uncanny valley, and customer preference for efficiency over novelty kept this prediction from materializing in 2025. We’ll need some technology breakthroughs and some carefully designed experiences for this to come to life in 2026. I’m convinced it will; it’s just a matter of when.

Grade: C — Overestimated progress and demand for virtual human avatars.

12. AI-Generated Immersive Game Worlds Launch (C-)

What I predicted: “An AI Game launches with GenWorlds... AI that can generate vast interactive and detailed game worlds that adapt to player choices.”

What happened: AI in games advanced pretty incrementally in 2025. We saw clear NPC dialogue improvements, a ton of procedural and generated content experiments, quite a few clever AI development tools, and MCP servers for pretty much every game engine. We announced Genie 3 from DeepMind this summer, and a range of startups began showing off 3D Gaussian Splat based environments that you can explore in a video-game-like experience. But none of these advancements really hit the mark for what I had in mind.

We’re still looking for the first AI-generated game world launched as a mainstream title. There’s a lot of energy in this space right now, but the “GenWorlds” moment (meaning a game where AI generates the explorable world in real-time) didn’t hit the market in 2025.

Game development cycles are long and the technology is almost there. 2026 should be interesting.

Grade: C- — Too early.

What I Missed Entirely

Some of the things that seem obvious now are where I missed the most in 2025. A few big ones that stand out to me are:

DeepSeek’s disruption. A Chinese lab releasing a competitive model at a fraction of the cost—open-weight—rattled markets and changed the game right out of the gates in 2025. I predicted cost declines but not where the disruption would come from nor how much we’d see. I called the trend Moore’s Law on steroids when really it was more like a coked out meth-head on steroids.

The MCP standardization speed. I predicted “services designed for AI agents” but didn’t foresee how quickly formal protocols would emerge and get adopted by all major players. The industry standardized faster than expected. This is one of the more exciting spots of coordination in the industry right now and I find it really encouraging.

The acquisition pattern. I predicted AI memory banks would emerge; I didn’t predict these startups would get snapped up before they scaled. Actually, this whole new trend of buying out founders that we’ve seen with Character.ai, Windsurf and most recently Groq is an interesting evolution of the M&A playbook. I definitely didn’t see this coming.

Regulatory acceleration. The EU AI Act also shaped the industry in ways I should probably have thought about. But regulatory stuff is kind of out of my wheelhouse, so I’m giving myself a pass here.

The Scorecard

| Prediction | Grade |
| --- | --- |
| Inference costs plummet | A |
| Agents break through | A- |
| AI music breakthrough | A |
| Services for AI agents | A |
| AI coding gains traction | B+ |
| Personalized shopping | B+ |
| AI streaming cartoon | B |
| AI memory banks | B- |
| AI creativity / Adobe | B- |
| Multimodal interaction | C+ |
| Embodied AI | C |
| AI game worlds | C- |

Overall: B+
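
For readers who want to check the overall grade, here is a quick sketch of the arithmetic. It assumes a conventional 4.0 grade-point scale and equal weight for each of the twelve predictions. Neither assumption comes from the scorecard itself, and the names `GRADE_POINTS`, `SCORECARD`, `average_points`, and `nearest_letter` are purely illustrative.

```python
# Grade points on a conventional 4.0 scale (an assumption, not the author's stated method).
GRADE_POINTS = {
    "A": 4.0, "A-": 3.7,
    "B+": 3.3, "B": 3.0, "B-": 2.7,
    "C+": 2.3, "C": 2.0, "C-": 1.7,
}

# The twelve grades from the scorecard, in prediction order (1 through 12).
SCORECARD = ["A", "A-", "A", "A", "B+", "B+", "B", "B-", "B-", "C+", "C", "C-"]


def average_points(grades):
    """Return the unweighted mean grade-point value of the given letter grades."""
    return sum(GRADE_POINTS[g] for g in grades) / len(grades)


def nearest_letter(points):
    """Return the letter grade whose point value is closest to `points`."""
    return min(GRADE_POINTS, key=lambda letter: abs(GRADE_POINTS[letter] - points))


if __name__ == "__main__":
    mean = average_points(SCORECARD)
    print(f"mean = {mean:.2f}, nearest letter = {nearest_letter(mean)}")
```

Under those assumptions the twelve grades average about 3.06, which sits nearest a plain B. That makes the B+ overall look like a judgment call rather than a straight average, which is fair game for anyone deciding whether the grading was too generous.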

I think I got many of the big trends right: agents, inference economics, AI music, services for AI, and coding tools. Where I missed, it was mostly on timing. Embodied AI and AI game worlds are coming and the tech will be here before we know it, but it just didn’t happen in 2025.

The biggest lesson: Technology is moving extremely fast right now, but products are taking longer. There’s probably something to learn for PMs here that would make for an interesting article. MCP was infrastructure I didn’t see coming (but probably should have). The AI streaming cartoon I described is definitely being built right now by someone, but it didn’t ship in 2025. AI game worlds need more development cycles to be product ready.

The gap between demo and product is still where I think all the interesting work happens. Good thing it’s what I work on every day!

What’s Next

2026 predictions drop next week. Here’s the short version: I think this is the year agents stop being demos and start being daily tools. The infrastructure is in place. The battle now is trust.

I’ll break down what I’m betting on—and what I’m building toward at Google Labs.

Think I graded myself too generously? Too harshly? Hit reply—I read everything.